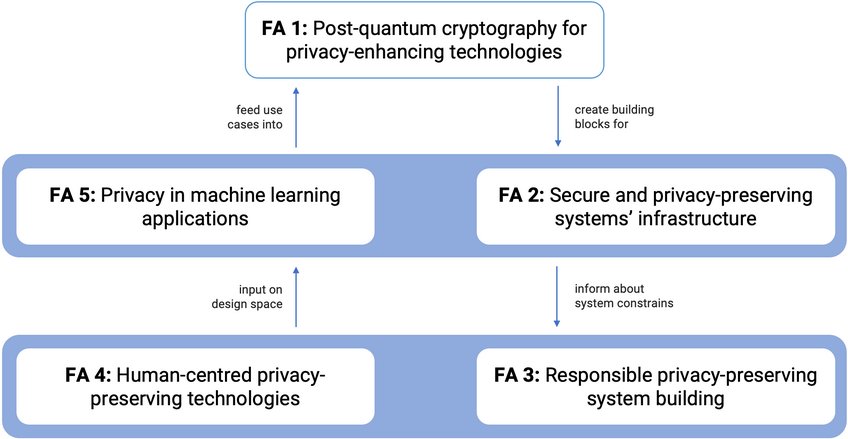

Focus Areas

FA 1: Post-quantum cryptography for privacy-enhancing technologies

Involved PIs: Eike Kiltz, Peter Schwabe, Carmela Troncoso

Cryptography is a main building block of privacy-enhancing technologies (PETs) and privacy-preserving systems. The cryptography community is currently developing post-quantum cryptography: cryptographic primitives that can resist an attacker equipped with a large universal quantum computer. The post-quantum effort is still in its early stages and is mostly focused on developing the next generation of asymmetric cryptography. Driven by an open standardization effort by NIST, this migration mostly focuses on key agreement protocols and digital signatures. So far, this effort has produced three standards with participation from PI Schwabe and PI Kiltz, namely the key-encapsulation scheme ML-KEM and the signature schemes ML-DSA and SLH-DSA. While migrating to post-quantum key agreement and signatures is an important step towards creating end-to-end encrypted channels secure against quantum-equipped attackers, securing communications is not sufficient to guarantee privacy in the digital world, as privacy guarantees also need to exist at the application layer. A wide range of privacy-preserving cryptographic primitives is currently available for this purpose. These primitives make it possible to perform generic computations (e.g., secure multiparty computation or homomorphic encryption) or specific operations (e.g., private set intersection or private information retrieval) on encrypted data, as well as to prove statements about secrets without revealing the secrets themselves (e.g., anonymous credentials). Yet most of these primitives, with the notable exception of homomorphic encryption, are typically based on cryptographic operations that would not protect privacy against attackers equipped with a large universal quantum computer. Moreover, the techniques used to advance asymmetric cryptography do not directly apply to the above-mentioned privacy-preserving cryptographic primitives. For most of them, there exist proposals based either on lattice assumptions (just like ML-KEM and ML-DSA) or on properties of hash functions (just like SLH-DSA) that are believed to be secure against quantum attackers, but whose privacy protection is still not well understood.

The goal of this Focus Area is to develop the next generation of PETs-oriented cryptographic primitives. These must enable the functionalities necessary to provide privacy at the application layer and, at the same time, provide strong, formally proven post-quantum security guarantees. To ensure the wide applicability of our results, we will pay special attention to creating cryptographic primitives that can be efficiently and securely implemented, so that they can be deployed in a wide range of real-time applications and on constrained devices.

FA 2: Secure and privacy-preserving systems’ infrastructure

Involved PIs: Jana Hofmann, Thorsten Holz, Ghassan Karame, Katharina Kohls, Veelasha Moonsamy, Clara Schneidewind, Peter Schwabe, Yuval Yarom

Privacy-preserving systems do not exist in a void: even if cryptography can protect data in transit, at rest, and during processing, these guarantees only hold if the underlying infrastructure is designed to support them. The goal of this Focus Area is to develop privacy-mindful infrastructures that provide high assurance that the privacy properties achieved at the application layer will not be undermined by leaks due to system architecture choices, including the operating system and the network. Research in this area will span two directions.

In the first direction, we aim to ensure that the computational infrastructure (e.g., processors and the operating system) on which privacy-preserving systems are based does not leak any information that could break the high-level privacy properties of these systems. This includes safeguarding operating systems from vulnerabilities and side-channel attacks, as well as providing strong isolation between processes so that privacy-preserving computations can be securely executed even in adversarial environments, such as the cloud. In parallel, this Focus Area will investigate ways to prevent private information from leaking at the network and physical layers. Our aim is to ensure that network protocols do not undermine the security of application-level privacy mechanisms, and that signals at the physical layer cannot be used to infer sensitive, private information. Research in this direction will cover the design of novel secure hardware and software architectures, as well as mechanisms to improve the privacy of legacy systems.

The second direction focuses on strengthening the security of decentralized systems, which are a main building block of privacy-preserving applications. The Focus Area will foster research on the interplay between security, availability, and privacy in decentralized systems, with the goal of characterizing the region of feasibility where strong privacy protections can co-exist with other desirable properties. In particular, the Focus Area will consider different decentralized privacy-preserving implementations, from purely software-based solutions for confidentiality (e.g., using zero-knowledge proofs) to hardware-based solutions, such as those using trusted execution environments.

FA 3: Responsible privacy-preserving system building

Involved PIs: Asia Biega, Karola Marky, Veelasha Moonsamy, Carmela Troncoso, Yixin Zou

Enabling privacy-preserving solutions alone is not enough to eliminate all possible risks that result from interactions with online services. For instance, privacy-preserving advertising (a) allows the creation of targeted advertisements without collecting user profiles, and (b) does not reveal personal data to trackers and advertisers. However, the resulting targeted advertisements can still be biased, which in turn can expose users to potentially discriminatory content (e.g., receiving ads for jobs with unduly low pay) or polarizing information (e.g., emotional content creating echo chambers), or can lower the user’s resilience (e.g., when users only receive highly desirable content that does not challenge their ideas). Consequently, solutions in the form of algorithms, mechanisms, and tools to mitigate these risks are required to ultimately ensure that users can maintain their identity and sovereignty online. To develop these solutions, the socio-legal environment in which privacy-preserving technologies are deployed, as well as their scope, requires careful consideration.

To this end, the Focus Area will reshape how privacy-preserving solutions are designed and integrated into online services, moving from ensuring privacy solely by technical means towards additionally mitigating risks and preventing these solutions from interfering with the freedom of individuals. To enable this change, research in this Focus Area takes a holistic view by combining system design with an interdisciplinary approach that considers both technical developments and their deployment context. For example, this Focus Area plans research that bridges the gap between software practices, legal compliance, and human aspects. In doing so, we will also investigate new technical definitions to represent legal principles (e.g., purpose limitation, which is often overlooked in technical system design), and use these novel definitions to equip software developers with tools to produce code that, by default, is compliant with privacy-relevant legislation and supports human interaction. We will interact with relevant stakeholders such as developers, legal experts, policy makers, and end users to ensure that the necessary privacy requirements and constraints are captured at various system development stages.

In terms of context, the research in this Focus Area will pay particular attention to how the pervasiveness of technology and the applications it enables change the relationship between privacy and safety in scenarios where the constraints and norms of the analogue world cannot be applied. Virtual reality is one example of such an application, where the boundary between virtual and physical bystanders is blurry. Online (social) platforms are another example, as they enable exchanges of content in ways that are not possible in the physical world (e.g., distribution of harmful material, such as non-consensual (sexual) images). This Focus Area will investigate risks unique to these new contexts to develop targeted risk-prevention mechanisms.

FA 4: Human-centered privacy-preserving technologies

Involved PIs: Karola Marky, Veelasha Moonsamy, Yixin Zou

Privacy mechanisms, including those designed within this IMPRS, are only effective if they can be understood and used by those who interact with them. This includes end users of digital systems, as well as developers who implement privacy mechanisms. However, current privacy mechanisms often fall short. They are typically (a) realized as sets of complex settings that have to be configured in each and every digital system (e.g., social networks, apps, or browsers), (b) counter-intuitive, as they do not match processes well known in the offline world (e.g., determining who can or cannot listen to a conversation), and (c) poorly integrated into the daily routines of end users (e.g., cookie banners that frustrate rather than help users). Further, current privacy mechanisms only poorly reflect the unique needs of at-risk user groups, including people with disabilities or older adults, leaving their privacy and security needs underserved.

Research in this Focus Area will improve the current state of privacy mechanisms by considering three complementary areas: (1) creating more inclusive and accessible mechanisms, (2) designing easy-to-understand mechanisms, and (3) integrating these mechanisms well into the routines of different end-user groups. The research on inclusion and accessibility will focus on at-risk user groups (e.g., hard-of-hearing people or older adults), identifying their unique needs and considering them in mechanism and interface design. Research on easy-to-understand mechanisms will specifically consider the privacy risks associated with novel interfaces (e.g., AI-based systems or agents) and data donations (e.g., medical data) to find a balance between required end-user education, automation, and privacy-by-design. Finally, the research on integration aims to improve how the mechanisms fit into daily routines, considering not only the end-user groups detailed above but also the developers who require assistance and knowledge in creating privacy-preserving, context-dependent systems.

FA 5: Privacy in machine learning applications

Involved PIs: Thorsten Holz, Ghassan Karame, Veelasha Moonsamy, Carmela Troncoso, Bilal Zafar

The integration of machine learning in applications brings a wide range of security and privacy risks. Machine-learning models are susceptible to attacks that make them provide arbitrary responses, which can mislead the model into making harmful decisions (e.g., if inputs to machine-learning systems used by self-driving vehicles are manipulated). They are also susceptible to attacks that make them leak private information about samples in the training data, which can include data as sensitive as health information in the case of medical applications. These risks are exacerbated by the advent of Generative AI (GenAI). GenAI systems can interact with surrounding systems in novel ways (e.g., via agentic workflows) and are much more widely adopted than previous AI systems, so security and privacy issues can have a much greater impact. Moreover, because these models are much more complex than traditional machine-learning models, our understanding of their behavior and the risks they bring is very limited.

This Focus Area aims to enable the seamless integration of privacy protections into machine-learning models, allowing them to be deployed while mitigating the aforementioned risks. First, we pursue foundational research to improve our understanding of the inner workings of models, developing explainability and interpretability methods targeted specifically at identifying what makes models vulnerable to privacy attacks. Second, we investigate privacy protection techniques across the model life cycle, ranging from training-time measures, such as differential privacy or data cleaning, to post-hoc protections that can be applied once the model is deployed, such as filtering queries identified as potentially malicious. Finally, we explore the interplay between privacy and other desirable properties of machine-learning-based systems, such as robustness, explainability, or fairness, in order to understand which of these properties are compatible and when enforcing one property is detrimental to other protections.
